Three things your eyes can’t see that cameras can

Both our eyes and digital cameras are imperfect creations that only see part of the world around us. What they see isn’t always the same, and in some instances a camera makes the invisible visible to us. Here are three things your eyes can’t see that cameras can.

1. Infra-Red Pollution

Let’s start with an easy one.

Why can a camera see the red light on your TV remote, but you can’t?

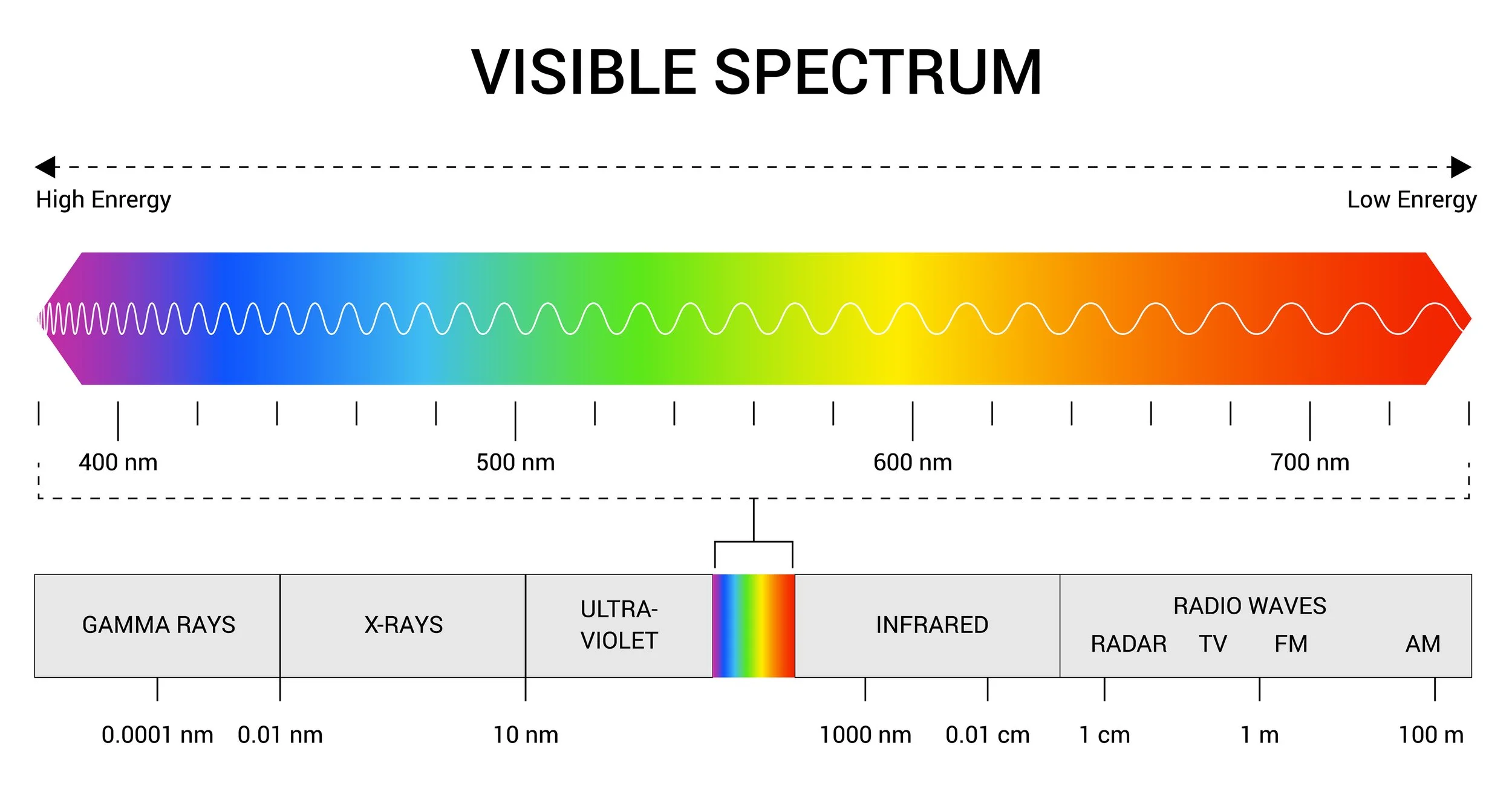

Visible light sits in a pretty narrow slice of the electromagnetic spectrum: wavelengths between about 400 and 700 nanometres. Red light is at the long end of that range, and once a wavelength creeps past 700nm, it tips over into infra-red and becomes invisible to us.

The eyes of many animals, and the sensors on digital cameras, detect wavelengths outside the range visible to humans, including infra-red at around 700–730nm. In most environments this doesn’t matter, as IR light is far less intense than visible light. But when shooting with neutral density (ND) filters, which evenly block visible light but don’t necessarily block IR, the IR light becomes relatively more intense, and the camera reads this as a colour shift towards red.
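To put rough numbers on that shift, here is a minimal Python sketch. The starting levels (IR at 1% of the visible signal) and the `ir_share` helper are made up for illustration, and it assumes a plain ND filter that passes all IR, which a real filter may not.

```python
def ir_share(visible, infrared, nd_stops, ir_stops_blocked=0.0):
    """Fraction of the sensor signal that comes from IR after an ND filter.

    visible, infrared: relative signal levels with no filter (made-up units).
    nd_stops: stops of visible light the filter removes (each stop halves it).
    ir_stops_blocked: stops of IR the same filter removes (0 = passes all IR).
    """
    visible_after = visible / (2 ** nd_stops)
    infrared_after = infrared / (2 ** ir_stops_blocked)
    return infrared_after / (visible_after + infrared_after)


# Illustrative numbers only: IR starts at 1% of the visible signal.
for stops in (0, 3, 6, 10):
    share = ir_share(visible=100.0, infrared=1.0, nd_stops=stops)
    print(f"{stops:>2} stops of ND: IR is {share:.1%} of the signal")
```

Setting `ir_stops_blocked` equal to `nd_stops` models an IR-blocking ND, and the IR share then stays at its unfiltered level however strong the filter is.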

Most high-quality ND filters are IR-blocking to counter this effect. Your TV remote uses a very intense IR light to transmit information, which your camera can see thanks to its IR sensitivity, even in a normal environment.

So what does absolute temperature have to do with colour? When we relate this back to the vocabulary we use, it doesn’t seem to make sense. How did filmmakers start referring to higher Kelvin values as colder, and lower Kelvin values as hotter?

2. Flickering & Banding

You’re filming at an indoor location: you block your shot, frame up, film and review the take. Then you spot it. That LED light or TV screen in the back of the shot is flickering or, even worse, weird black bands are moving up and down the entire screen.

Most LEDs aren’t continuously lit when ‘on’ — they flicker rapidly with minor fluctuations in voltage, only illuminating when the supply hits the right threshold. Just as our eyes perceive a fast series of frames as smooth motion in video, we perceive this flickering as a constant light source. The camera isn’t perceiving something we can’t — it’s just catching some frames at the wrong moment, when the LED isn’t illuminated.

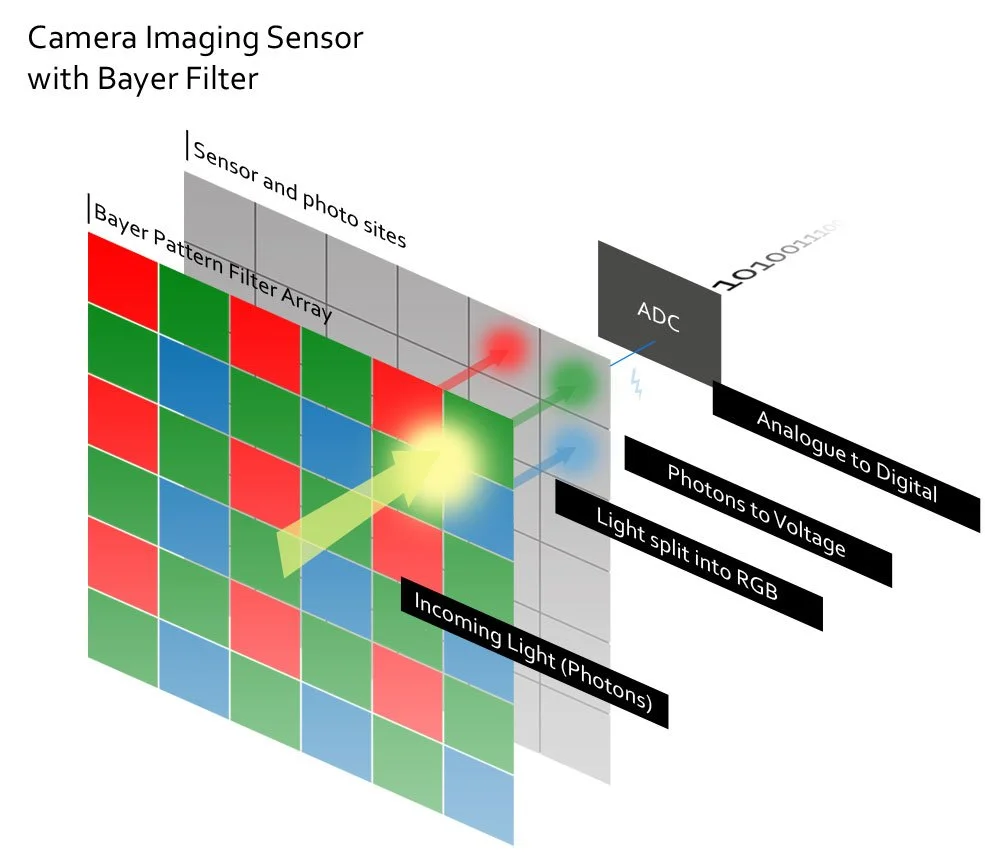

Banding is another symptom of the same problem, this time caused by the way the sensor reads its pixels line by line from top to bottom. If a light source flickers on and off while this readout is happening, bands of light and dark appear in the frame.
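A toy simulation makes the banding easier to picture. This is only a sketch under simplifying assumptions: the light is modelled as a square wave (fully on, then fully off), the rows start exposing one after another at an even rate, and every number below is hypothetical rather than taken from a real camera.

```python
def row_brightness(rows, readout_time, exposure, flicker_hz, duty=0.5, samples=200):
    """Fraction of its exposure each sensor row spends under a lit LED.

    Assumes a square-wave light (on for `duty` of each cycle) and a sensor
    that starts exposing rows one after another over `readout_time` seconds.
    """
    period = 1.0 / flicker_hz
    levels = []
    for row in range(rows):
        start = readout_time * row / rows  # lower rows start exposing later
        lit = 0
        for i in range(samples):
            t = start + exposure * (i + 0.5) / samples  # middle of each time slice
            if (t % period) < duty * period:
                lit += 1
        levels.append(lit / samples)
    return levels


# Hypothetical numbers: 100 Hz flicker, 1/50 s readout, 20 rows for brevity.
short = row_brightness(rows=20, readout_time=1 / 50, exposure=1 / 400, flicker_hz=100)
whole = row_brightness(rows=20, readout_time=1 / 50, exposure=1 / 100, flicker_hz=100)

# Left column: short exposure (banded). Right column: one full flicker cycle (even).
for s, w in zip(short, whole):
    print(f"{'#' * round(s * 20):<20} | {'#' * round(w * 20)}")
```

In this model the short exposure swings between fully lit and fully dark rows, while an exposure lasting exactly one flicker cycle gives every row the same amount of light.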

Solutions to this include adjusting the shutter angle or framerate on your camera until the flickering vanishes (synchronising the camera to the frequency of the flickering), or bringing your own purpose-built film lighting to the location. DaVinci Resolve has a handy ‘deflicker’ effect that can use temporal interpolation to solve most instances of flickering.
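As a rough guide to the first of those fixes, the sketch below lists shutter settings whose exposure lasts a whole number of flicker cycles, so every frame collects the same amount of light. It assumes you already know the flicker frequency. Mains-powered lights typically flicker at twice the mains frequency (100 Hz on a 50 Hz supply), but dimmed or cheaply driven LEDs can pulse at quite different rates, so treat the numbers as a starting point. The function name is our own.

```python
from fractions import Fraction


def flicker_free_shutters(flicker_hz, fps):
    """Return (exposure time, shutter angle) pairs spanning whole flicker cycles."""
    frame_time = 1 / Fraction(fps)
    results = []
    cycles = 1
    # Keep adding flicker cycles until the exposure no longer fits in one frame.
    while cycles / Fraction(flicker_hz) <= frame_time:
        exposure = cycles / Fraction(flicker_hz)
        angle = 360 * exposure / frame_time  # shutter angle in degrees
        results.append((exposure, float(angle)))
        cycles += 1
    return results


# Example: 100 Hz flicker (50 Hz mains) while shooting at 24 fps.
for exposure, angle in flicker_free_shutters(flicker_hz=100, fps=24):
    print(f"{exposure} s -> {angle:g} degrees")
```

At 25 fps the same light gives the round figures of 90, 180, 270 and 360 degrees, which is one reason matching your framerate to the local mains frequency makes life easier.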

3. Moiré

Experienced filmmakers know that if you point your camera at a low pixel-pitch screen or a fine repeating pattern, a strange wavy artefact appears.

This Moiré pattern gets its name from a textile that exhibits the same wavy, watered appearance. The human eye can see Moiré patterns in textiles and in overlapping, but slightly misaligned, regular patterns. It’s a manifestation of wave interference, which plays a role in both optics and acoustics. When two peaks or two troughs of interfering waves align, they combine and increase in amplitude; when a peak and a trough align, they cancel each other out.

Digital cameras see Moiré on screens or fine patterns when we don’t because they are part of the cause. The pixels on the screen are in a regular pattern, as are the photodetectors on a digital camera sensor. Unless the two are perfectly aligned and matched in resolution, Moiré will appear in the final image. This doesn’t happen in the human eye because our ‘photodetectors’ are arranged irregularly rather than in a fixed grid. On camera, the solution is an optical low-pass filter, which very slightly blurs the image to break up that regularity before it hits the sensor. Moiré is a bit of a rabbit hole because it has a very fundamental physical cause. It can also occur when downscaling footage or looking at an image of a regular pattern on a screen; see this ProAV video and this Tom Scott video for more.
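For a feel of how a tiny mismatch turns into a big pattern, here is a deliberately simplified one-dimensional sketch. It treats the screen and the sensor as two parallel sets of evenly spaced lines and uses the standard beat relation for such gratings, period = a × b / |a − b|. It ignores rotation, colour filter arrays and lens blur, and the pitches are hypothetical, measured in sensor pixels.

```python
def moire_period(pitch_a, pitch_b):
    """Spacing of the beat pattern between two parallel line grids of similar pitch."""
    if pitch_a == pitch_b:
        return float("inf")  # perfectly matched grids: no beat pattern at all
    return pitch_a * pitch_b / abs(pitch_a - pitch_b)


# Sensor pitch is 1 pixel; the screen's pixels land slightly larger on the sensor.
sensor_pitch = 1.0
for screen_pitch in (1.0, 1.02, 1.05, 1.2):
    period = moire_period(sensor_pitch, screen_pitch)
    print(f"screen pitch {screen_pitch:.2f} px -> bands every {period:.1f} px")
```

The closer the two pitches get without quite matching, the wider the bands become, which is why Moiré often shows up as broad waves sweeping across a screen rather than fine noise.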

These examples are a mixture of cameras seeing something very real that our eyes can’t, and anomalies caused by their design. IR pollution is the former, Moiré is the latter, and flickering is a bit of both. What this blog, along with our previous entry on colour temperature, is getting at is that when you look into the science behind these anomalies, you realise that the slice of the world we have evolved to see is very narrow.